@Furler Very nice - thank you for sharing that!

For what it’s worth, here’s one version of the code I ended up with. It prints the side lengths of the smallest 15 Heron triangles in the sequence. (I had a more elaborate version that involved setting up a class for working with elements of \mathbf Z[(1 + \sqrt{85}) / 2], but it’s really overkill to write your own class for this when packages like SymPy exist.)

import math

def issquare(n: int) -> bool:

"""Return True if n is a perfect square, else False."""

return n >= 0 and (a := math.isqrt(n)) * a == n

# Find all pairs (A, B) with A*A - 85*B*B = -85 * 37^2

# such that 37√85 <= A + B√85 < 37√85(285769 + 30996√85).

triangles = [

(85 * B, math.isqrt(37 * 37 + 85 * B * B))

for B in range(37 * 30996)

if issquare(37 * 37 + 85 * B * B)

]

# Cycle through, multiplying each pair by the multiplier and

# placing it back on the queue.

for _ in range(15):

A, B = triangles.pop(0)

a, b, c = 37, (B - 3) // 2, (B + 3) // 2

print((a, b, c))

triangles.append((285769 * A + 85 * 30996 * B, 30996 * A + 285769 * B))

Output:

(37, 17, 20)

(37, 1105, 1108)

(37, 44197, 44200)

(37, 5286725, 5286728)

(37, 632362597, 632362600)

(37, 25261121185, 25261121188)

(37, 3021565090337, 3021565090340)

(37, 361419254820385, 361419254820388)

(37, 14437690680645637, 14437690680645640)

(37, 1726939268596598885, 1726939268596598888)

(37, 206564838060901696837, 206564838060901696840)

(37, 8251688856209585815825, 8251688856209585815828)

(37, 987011415692141369302097, 987011415692141369302100)

(37, 118059654415290214752862225, 118059654415290214752862228)

(37, 4716153745485876567325200517, 4716153745485876567325200520)

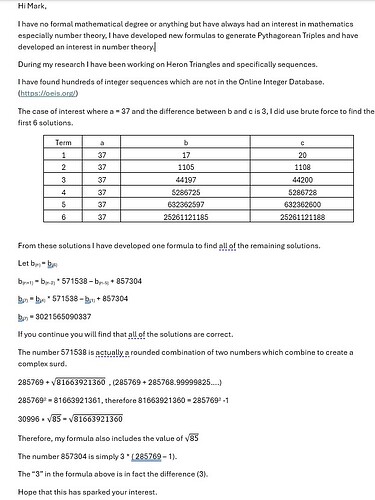

Explanation (which is much longer than the code): as before, we recast the problem in terms of finding pairs (A, B) of nonnegative integers satisfying A^2 - 85B^2 = -85\cdot 37^2. I’ll call those pairs “solution pairs”. Here A is the area of the Heron triangle, and B = 2b + 3, so the side lengths of the triangle are 37, (B - 3)/2 and (B + 3)/2.

Every solution pair (A, B) corresponds to a real number A + B\sqrt{85} that’s greater than or equal to 37\sqrt{85} (in the usual ordering of the real numbers).

Now the key observation is that if the real number A + B\sqrt{85} comes from a solution pair, then so does the product (A + B\sqrt{85})(285769 + 30996\sqrt{85}) (this is easily verified just by multiplying everything out). Furthermore, we can go backwards, too: if A + B\sqrt{85} corresponds to a solution pair, then so does the quotient (A + \sqrt{85}B)/(285769 + 30996\sqrt{85}), provided only that that quotient is still greater than or equal to 37\sqrt{85}.

So the solutions naturally fall into a collection of (three, as it turns out) geometric progressions, each with multiplier 285769 + 30996\sqrt{85}: every solution is uniquely of the form (A + B\sqrt{85})(285769 + 30996\sqrt{85})^k for some nonnegative integer k and some solution pair (A, B) for which 37\sqrt{85} \le A + B√85 < 37\sqrt{85}\cdot(285769 + 30996\sqrt{85}).

The code above simply does a brute force search to find all solution pairs (A, B) such that A + B\sqrt{85} lies in the interval [37\sqrt{85}, 37\sqrt{85}\cdot(285769 + 30996\sqrt{85})). It then repeatedly pops the smallest solution from the front of its list, prints it, multiplies it by the multiplier to get the next largest solution, and pushes that back to the end of the list. The brute force search part is very quick (it takes around a tenth of a second on my laptop), and finds exactly three solutions. There’s a little bit of sleight of hand there - we make use of the fact that if A^2 - 85 B^2 = -85\cdot 37^2 then A must be divisible by 85, so we actually search for all pairs (A, B) such that A^2 - 85B^2 = -37^2 and A + B\sqrt{85} lies in the interval [37, 37\cdot(285769 + 30996\sqrt{85})), and then scale the corresponding numbers A + B\sqrt{85} up by a factor of \sqrt{85}.

As to where the magic multiplier m = 285769 + 30996\sqrt{85} comes from: it’s the smallest norm-1 unit that’s larger than 1 in \mathbf Z[\sqrt{85}]. It can be found either (1) as the sixth power of the fundamental unit (9 + \sqrt{85})/2 that I mentioned in my last post, or (2) via a very quick brute force search: 30996 is the smallest positive B for which 1 + 85B^2 is a square, and then 285769 is the square root of that square.

>>> next(B for B in itertools.count(1) if issquare(1 + 85*B*B))

30996

>>> math.isqrt(1 + 85*B*B)

285769

And of course it’s closely related to the value 571538 that appears in your recurrence.

>>> 285769 + 30996 * sqrt(85)

571537.9999982503

In fact, your recurrence is coming from the fact that m exactly satisfies the quadratic equation m^2 = 571538m - 1: if (A_i, B_i) and (A_{i+1}, B_{i+1}) are two successive solution pairs in one of our three families, then the next solution pair is (A_{i+2}, B_{i+2}) = (571538 A_{i+1} - A_i, 571538 B_{i+1} - B_i).

Nothing special: home laptop (MacBook Pro, M4 Pro CPU), and CPython 3.13.5 as installed by uv.