The problem

A common pattern is dispatching to different behavior based on an enum member. Two approaches dominate:

if/elif (or match/case):

- Dispatch logic lives apart from the enum definition

- Adding a member requires editing every dispatch site

- O(n) sequential scan - cost grows with member position

- Exception messages and logging require per-branch repetition

dict of callables:

- O(1), but the constant becomes a bare str - no type safety, no IDE completion

- Mistyping "New" instead of "NEW" is only caught at runtime (a KeyError, or a silent fall-through when .get() is used with a default)

- The callable is still separated from the constant it belongs to

Neither approach co-locates the constant with its behavior or provides O(1) dispatch with full enum semantics.
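For concreteness, the two conventional approaches might look like the sketch below. The function names dispatch_if_chain and dispatch_dict and the HANDLERS table are hypothetical, chosen only for illustration, and the handlers return strings instead of printing so the results are easy to inspect.

```python
from enum import Enum

class TaskStatus(Enum):
    NEW = "new"
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"

def dispatch_if_chain(status: TaskStatus) -> str:
    # Branches are tested in order, so later members cost more comparisons.
    if status is TaskStatus.NEW:
        return "Starting task..."
    elif status is TaskStatus.IN_PROGRESS:
        return "Already running."
    elif status is TaskStatus.COMPLETED:
        return "Nothing to do."
    raise ValueError(f"unhandled status: {status!r}")

# Dict of callables keyed by bare strings: O(1), but detached from the enum.
HANDLERS = {
    "NEW": lambda: "Starting task...",
    "IN_PROGRESS": lambda: "Already running.",
    "COMPLETED": lambda: "Nothing to do.",
}

def dispatch_dict(key: str) -> str:
    # A mistyped key such as "New" raises KeyError here; with
    # HANDLERS.get(key, fallback) it would pass silently instead.
    return HANDLERS[key]()
```

In both versions, adding a fourth member means touching code that sits somewhere other than the enum definition.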

Proposed solution: BehaviorEnum

A new Enum subclass where each member pairs a constant value with a callable, accessible via a do attribute:

    from enum import BehaviorEnum  # proposed; not in the stdlib today

    class TaskStatus(BehaviorEnum):
        NEW = "new", lambda: print("Starting task...")
        IN_PROGRESS = "in_progress", lambda: print("Already running.")
        COMPLETED = "completed", lambda: print("Nothing to do.")

    TaskStatus.NEW.value        # "new"
    TaskStatus.NEW.do()         # Starting task...
    TaskStatus("new").do()      # lookup by value + dispatch - O(1)

The callable lives on the member; dispatch is O(1) via attribute access; value and behavior are co-located at definition time.

A secondary benefit: BehaviorEnum.__new__ validates the callable at class-creation time, so you cannot define a member without wiring up its handler. Incomplete dispatch becomes a definition-time error rather than a runtime surprise.
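A minimal sketch of that definition-time check, assuming the __new__ shown in the Implementation section (copied here because BehaviorEnum is not in the stdlib). The Broken class and the define_incomplete_enum helper are hypothetical names used only for this illustration.

```python
from enum import Enum

class BehaviorEnum(Enum):
    """Copy of the proposed class, since it is not in the stdlib enum module."""
    def __new__(cls, value, do):
        if not callable(do):
            raise TypeError('%r is not callable' % (do,))
        obj = object.__new__(cls)
        obj._value_ = value
        obj.do = do
        return obj

def define_incomplete_enum():
    """Try to define an enum whose second member has no usable handler."""
    class Broken(BehaviorEnum):
        OK = "ok", lambda: "fine"
        BAD = "bad", "not a function"  # a str, not a callable
    return Broken

try:
    define_incomplete_enum()
    definition_error = None
except TypeError as exc:
    # The class statement itself fails; no member is ever dispatched.
    definition_error = exc
```

The error surfaces when the class body is evaluated, which for a module-level enum means at import time.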

Implementation

The implementation is intentionally minimal:

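    from enum import Enum

    class BehaviorEnum(Enum):
        """Enum where each member bundles a constant value with a callable behavior."""

        def __new__(cls, value, do):
            # Reject members whose second element cannot be called.
            if not callable(do):
                raise TypeError('%r is not callable' % (do,))
            obj = object.__new__(cls)
            obj._value_ = value   # only the constant takes part in value lookup
            obj.do = do           # the behavior is stored as a plain instance attribute
            return obj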

- No metaclass override required

- Regular methods can coexist with members

- Members are picklable across all protocols (restored by value lookup; the callable is never pickled)

- Aliases, iteration, __repr__, and functional creation syntax all work as expected
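A few of the properties listed above can be checked with a short sketch. The is_terminal method and the DONE alias are hypothetical additions for illustration, and the handlers return strings rather than printing.

```python
from enum import Enum

class BehaviorEnum(Enum):
    """Copy of the proposed class from the Implementation section."""
    def __new__(cls, value, do):
        if not callable(do):
            raise TypeError('%r is not callable' % (do,))
        obj = object.__new__(cls)
        obj._value_ = value
        obj.do = do
        return obj

class TaskStatus(BehaviorEnum):
    NEW = "new", lambda: "Starting task..."
    IN_PROGRESS = "in_progress", lambda: "Already running."
    COMPLETED = "completed", lambda: "Nothing to do."
    DONE = COMPLETED  # same (value, callable) tuple, so this becomes an alias

    def is_terminal(self):
        # A regular method defined alongside the members.
        return self is TaskStatus.COMPLETED

names = [m.name for m in TaskStatus]  # aliases are skipped during iteration
alias_is_same = TaskStatus.DONE is TaskStatus.COMPLETED
by_value = TaskStatus("in_progress")  # lookup uses the constant only
```

Because aliases are resolved by value, an alias declared with a different callable would still resolve to the first member and its original handler.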

Relationship to prior proposals

This space has been discussed before. The following summarises the relevant prior art and how this proposal differs.

2017: “Callable Enum values” (python-ideas)

Stephan Hoyer proposed a CallableEnum where def FOO(): inside the class body would become a member (python-ideas, April 2017 - thread). It was rejected - correctly - because bare function definitions in a class body trigger the descriptor protocol, requiring a metaclass override that prevents defining regular methods on the same enum.

BehaviorEnum avoids this entirely. Members use tuple syntax (NAME = value, callable), which is not subject to the descriptor protocol. No metaclass override is needed.
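The descriptor point can be seen with the plain stdlib Enum. In this sketch, WithDef and WithTuple are hypothetical class names: a def in the class body stays a method, while a tuple assignment becomes a member.

```python
from enum import Enum

class WithDef(Enum):
    A = 1

    def FOO(self):
        # Functions are descriptors, so Enum treats this as a method, not a member.
        return "called as a method"

class WithTuple(Enum):
    # A tuple is not a descriptor, so this is an ordinary member
    # (with a plain Enum, its value is the whole tuple).
    FOO = ("foo", len)

def_members = [m.name for m in WithDef]      # FOO is absent
tuple_members = [m.name for m in WithTuple]  # FOO is present
```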

2019–2021: Callable values closed as “not a bug”

Two bug reports (python/cpython#82556, python/cpython#89820) about callable enum values were closed as “not a bug” - the behavior is intentional. BehaviorEnum does not change that behavior; it sidesteps it via tuple syntax.

functools.partial workaround

The community workaround was wrapping callables in functools.partial. In Python 3.13, partial gained __get__ (python/cpython#121027), breaking this workaround (python/cpython#125316). The officially recommended replacement is @enum.member (3.11+), but that doesn’t provide a named do attribute, O(1) dispatch semantics, or a reusable base class.

aenum

Ethan Furman’s aenum library extends the enum module with additional types (AutoNumberEnum, OrderedEnum, UniqueEnum, etc.) and is the destination core developers have historically pointed people toward for enum-adjacent patterns. It does not provide a CallableEnum or equivalent - the specific dispatch-with-callable use case has no existing home there, in aenum or in the stdlib.

| | Prior CallableEnum proposals | BehaviorEnum |
|---|---|---|
| Member syntax | def FOO(): ... (descriptor conflict) | FOO = value, callable (tuple, no conflict) |
| Value access | Value is the callable | Separate value and do attributes |
| Dispatch | member() or member.value() | member.do(...) |
| Metaclass override needed | Yes | No |
| Regular methods allowed | No | Yes |

Performance

Methodology

Three dispatch strategies were benchmarked across all 100 member positions of a 100-member enum:

- Enum + match/case - standard match statement

- Dict + lambda - dict keyed by string, values are callables

- BehaviorEnum - .do() call directly on the member

Each position was timed with timeit (5 000 iterations × 7 repeats, minimum taken) to suppress scheduler noise. Statistical testing used a Kruskal-Wallis H-test (non-parametric) followed by Dunn post-hoc with Bonferroni correction. Complexity was confirmed via OLS linear regression of runtime vs. position.

Results

| Method | Mean (µs) | Complexity |
|---|---|---|
| BehaviorEnum | 0.0353 | O(1) |
| Dict + lambda | 0.0386 | O(1) |
| Enum + match/case | 0.7633 | O(n) |

All pairwise differences are statistically significant (Kruskal-Wallis H = 1667.76, p ≈ 0).

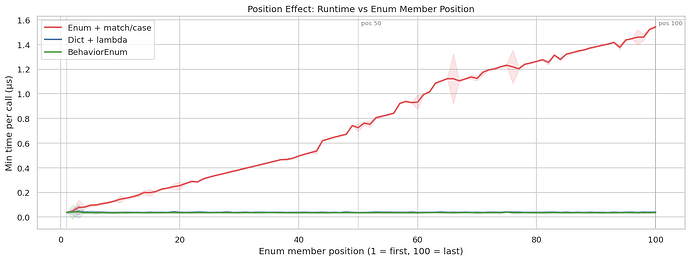

The chart plots runtime against member position across all 100 positions. The O(n) growth of match/case is unambiguous (R² = 0.986, slope = 0.016 µs/step, p = 1.09 × 10⁻⁹²). BehaviorEnum and Dict are flat throughout (slope ≈ 0, p > 0.25 for both).

At the extremes: match/case costs 0.038 µs at position 1 and 1.542 µs at position 100 - a 40× increase. BehaviorEnum stays at ~0.035–0.048 µs regardless of position. The ~9% mean advantage of BehaviorEnum over Dict (p = 1.17 × 10⁻¹⁴⁸) suggests that getattr + .do() is cheaper than a dict key lookup and call. The variance of match/case across all positions and repeats is also far wider, so its cost is unpredictable in a way that the cost of BehaviorEnum and Dict is not.

The key finding is not the absolute speed difference but the position-dependence of match/case: real-world dispatch cost varies based on where a member happens to fall in the enum definition. BehaviorEnum is O(1) and insensitive to enum size or member ordering.

Questions for the community

- Is do the right attribute name, or would something like behavior, handler, or fn be clearer?

- Should the callable validation in __new__ be opt-out (a class variable flag) for cases where users want to store a non-callable alongside the value?

- Is there appetite for this in the stdlib, or is aenum still the right home?